Unit 3 - Notes

Unit 3: Process Synchronization

1. Introduction to Processes

Concurrent Processes

Concurrent processes are processes that execute simultaneously (or virtually simultaneously using time-slicing) in an operating system.

- Independent Processes: Execution of one does not affect the execution of another.

- Co-operating Processes: Execution of one can affect or be affected by other processes executing in the system. These processes share resources (memory, code, or data).

Reasons for allowing process co-operation:

- Information Sharing: Accessing the same file or database.

- Computation Speedup: Breaking tasks into subtasks (requires multicore).

- Modularity: Dividing system functions into separate processes.

Hierarchy of Processes

In most OSs, processes are created by other processes, forming a tree structure.

- Parent Process: The creator.

- Child Process: The new process.

- Unix/Linux uses the

fork()system call to create a child process.

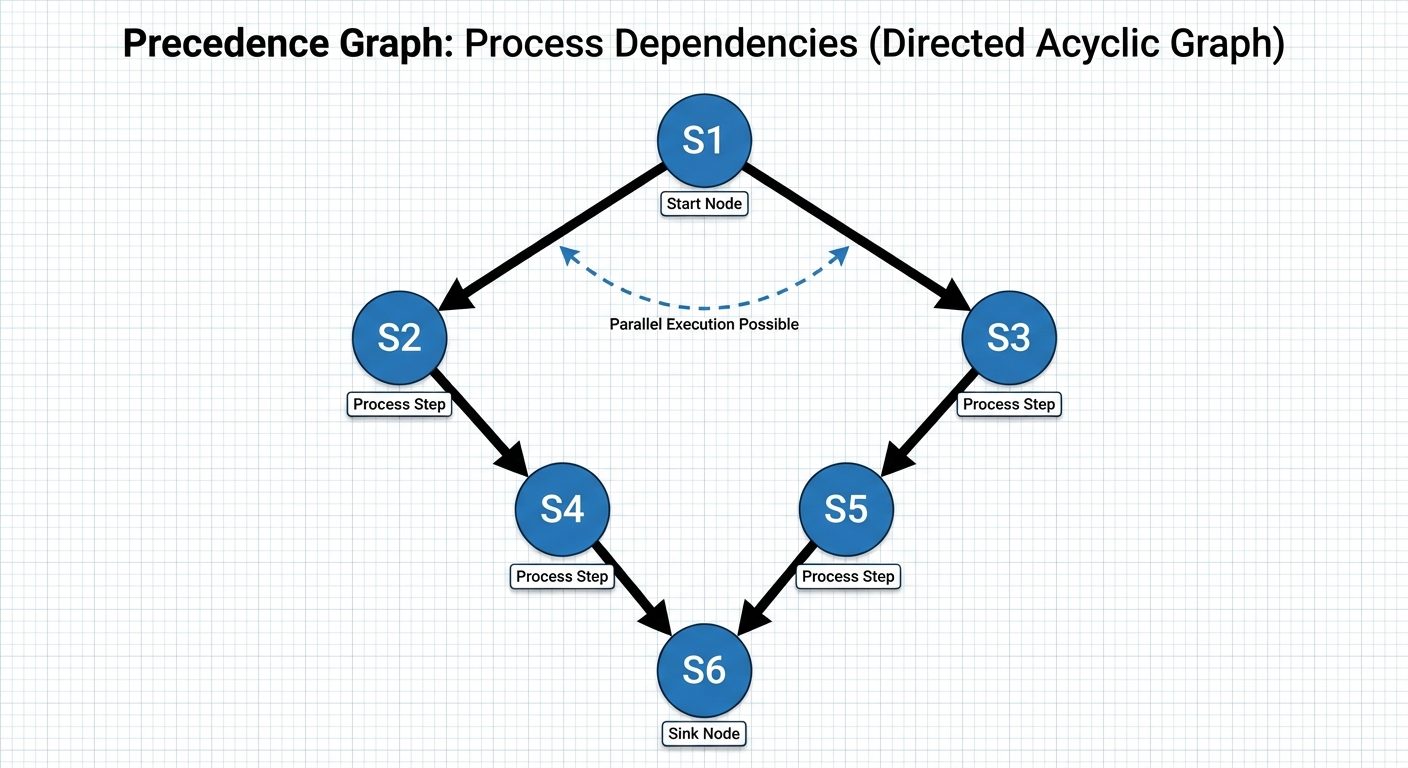

Precedence Graph

A precedence graph is a directed acyclic graph (DAG) used to describe the execution rules and dependencies between concurrent processes.

- Nodes: Represent individual statements or processes ().

- Edges: Represent execution order. An edge from to implies must complete before starts.

2. The Critical Section Problem

The Critical Section Problem is the core issue in process synchronization. Consider a system of processes . Each process has a segment of code called a Critical Section (CS) in which the process changes common variables, updates a table, or writes to a file.

Goal: Ensure that when one process is in its Critical Section, no other process is allowed to execute in its Critical Section (Mutual Exclusion).

General Structure of a Process

do {

entry section // Request permission to enter

critical section

exit section // Announce departure

remainder section

} while (TRUE);

Three Requirements for a Solution

- Mutual Exclusion: If process is executing in its critical section, no other processes can be executing in their critical sections.

- Progress: If no process is executing in its critical section and some processes wish to enter, only those processes not in their remainder sections can participate in deciding which will enter next. The selection cannot be postponed indefinitely.

- Bounded Waiting: There must be a limit (bound) on the number of times other processes are allowed to enter their critical sections after a process has made a request to enter its critical section and before that request is granted.

3. Synchronization Solutions

A. Classical Two-Process Solution (Peterson’s Solution)

A software-based solution restricted to two processes ( and ) that alternate execution. It uses two shared variables:

int turn: Indicates whose turn it is to enter.boolean flag[2]: Indicates if a process is ready to enter.

// Code for Process Pi

do {

flag[i] = true;

turn = j;

while (flag[j] && turn == j); // Busy wait

/* CRITICAL SECTION */

flag[i] = false;

/* REMAINDER SECTION */

} while (true);

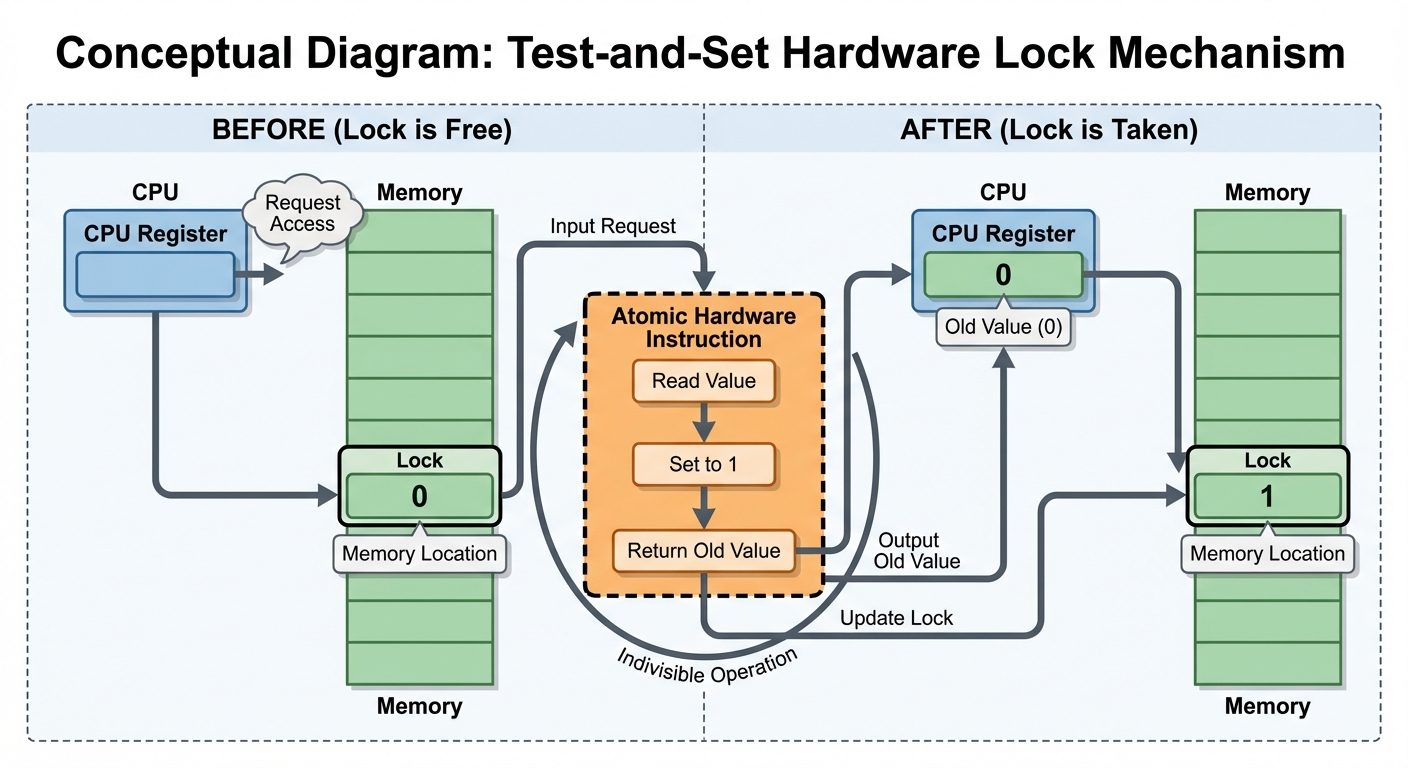

B. Hardware Primitives

Hardware instructions provide atomic (uninterruptible) operations to solve synchronization.

- TestAndSet:

- Reads a boolean value and sets it to TRUE atomically.

- Used to implement a lock.

- Swap (CompareAndSwap):

- Swaps the contents of two words atomically.

4. Semaphores

A Semaphore is a robust synchronization tool (an integer variable, ) that, apart from initialization, is accessed only through two standard atomic operations: wait() and signal() (historically P() and V()).

Operations

- Wait (P): Decrements the semaphore value. If the value becomes negative, the process blocks.

Cwait(S) { while (S <= 0); // Busy wait (in basic implementation) S--; } - Signal (V): Increments the semaphore value. If processes are waiting, one is woken up.

Csignal(S) { S++; }

Types of Semaphores

- Counting Semaphore: The integer value can range over an unrestricted domain. Used to control access to a resource with finite instances (e.g., 5 printers).

- Binary Semaphore (Mutex Lock): The integer value ranges only between 0 and 1. Used to solve the mutual exclusion problem.

Implementation Issues

- Busy Waiting (Spinlock): The process loops continuously while waiting. Wastes CPU cycles.

- Block/Wakeup: Better implementation uses a waiting queue. Instead of spinning, the process blocks itself and is placed in a queue associated with the semaphore.

5. Classical Problems of Synchronization

A. Producer-Consumer Problem (Bounded Buffer)

- Scenario: A Producer creates items and puts them into a fixed-size buffer. A Consumer takes items out.

- Constraints: Producer must wait if the buffer is full. Consumer must wait if the buffer is empty.

- Solution: Uses three semaphores:

mutex(binary, for buffer access),empty(counting, tracks empty slots),full(counting, tracks filled slots).

B. Reader-Writer Problem

- Scenario: A database is shared among several concurrent processes.

- Readers: Only read the data (multiple readers allowed simultaneously).

- Writers: Read and write data (exclusive access required).

- Problem: If a writer is writing, no other process (reader or writer) can access the database.

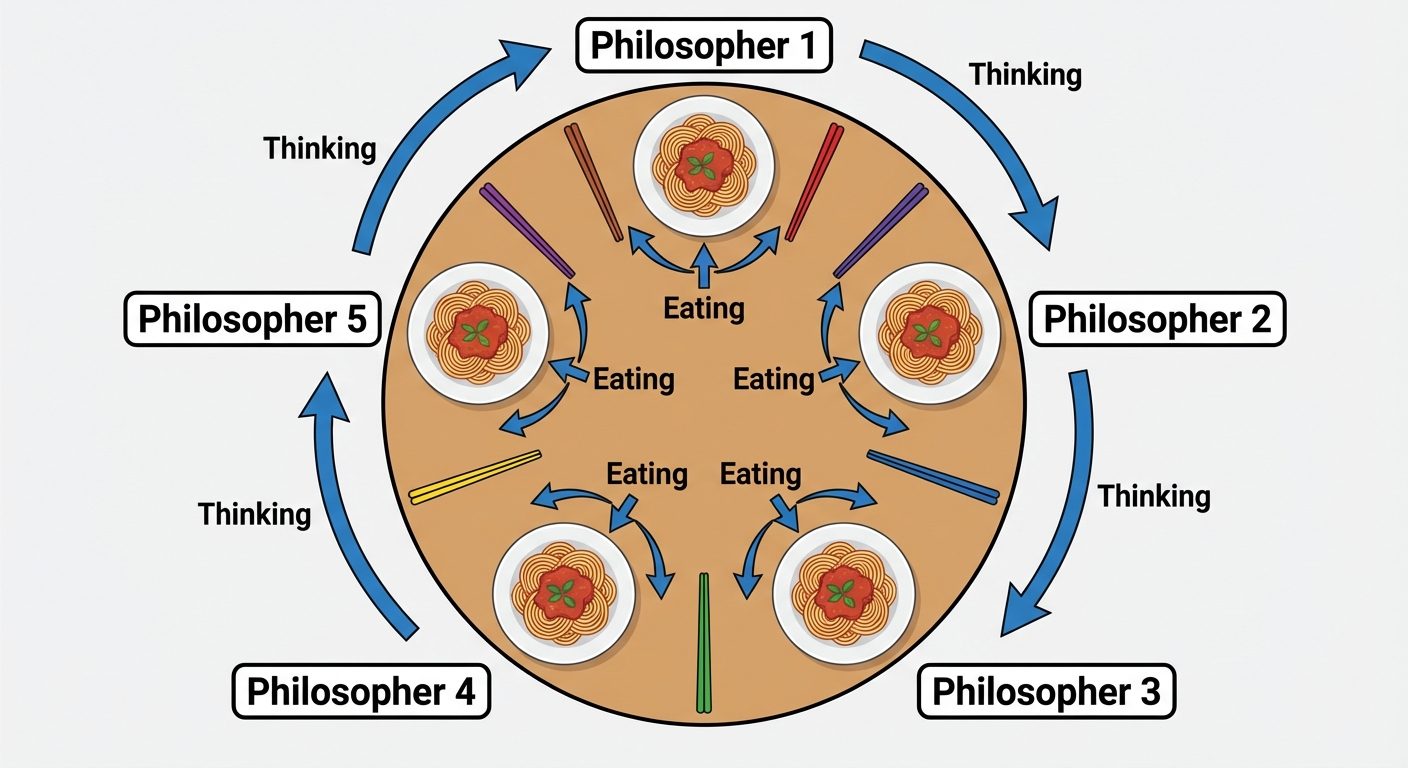

C. Dining Philosophers Problem

- Scenario: philosophers sit at a round table with a bowl of rice. A chopstick is placed between each pair of adjacent philosophers.

- Rules: A philosopher needs two chopsticks to eat (left and right). They cannot pick up both simultaneously.

- Problem: Deadlock occurs if all philosophers pick up their left chopstick simultaneously. Starvation occurs if a philosopher never gets both chopsticks.

6. Monitors

Semaphores are effective but error-prone (e.g., forgetting a signal()). A Monitor is a high-level abstraction (available in languages like Java/C#) that provides a convenient and effective mechanism for process synchronization.

Characteristics

- ADTs: Monitors are Abstract Data Types.

- Shared Data: Variables are defined inside the monitor and can only be accessed by procedures within the monitor.

- Mutual Exclusion: Only one process can be active within the monitor at a time (guaranteed by the compiler/OS).

- Condition Variables: Used for synchronization within the monitor (

condition x, y;).x.wait(): Suspend execution of the calling process until another process callsx.signal().x.signal(): Resume one suspended process.

7. Threads

Overview

A Thread is a basic unit of CPU utilization (also called a lightweight process). It comprises:

- Thread ID

- Program Counter

- Register set

- Stack

It shares with other threads belonging to the same process:

- Code section

- Data section

- OS resources (open files, signals)

Benefits of Multithreading

- Responsiveness: An interactive application can continue running even if part of it is blocked.

- Resource Sharing: Threads share memory and resources of the process by default.

- Economy: Creating threads is faster than creating processes (less overhead).

- Scalability: Threads can run in parallel on multiprocessor architectures.

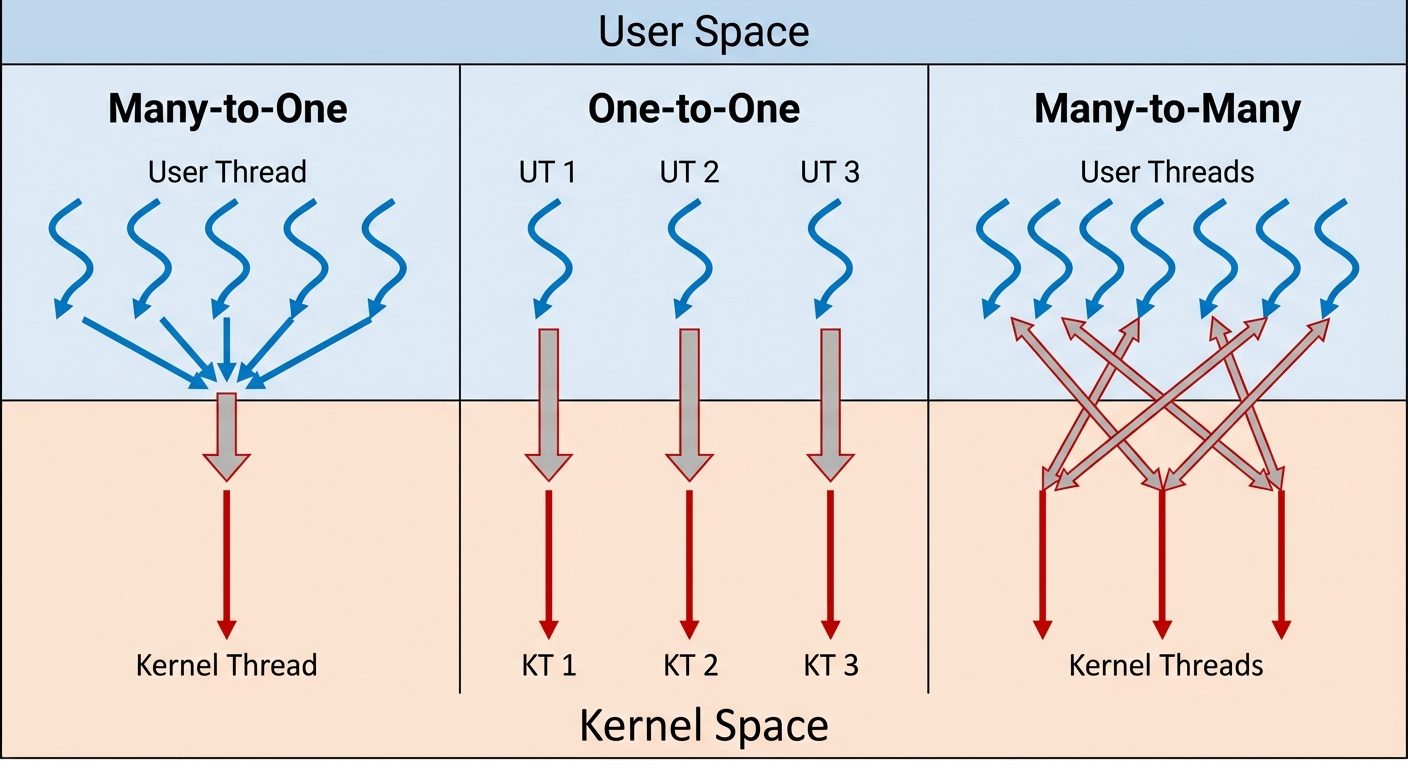

Multithreading Models

How user-level threads relate to kernel-level threads.

-

Many-to-One Model:

- Many user-level threads map to a single kernel thread.

- Efficient, but if one blocks, the entire process blocks.

- Example: Solaris Green Threads.

-

One-to-One Model:

- Each user-level thread maps to a kernel thread.

- More concurrency; blocking one doesn't block others.

- Overhead of creating kernel threads.

- Example: Windows, Linux.

-

Many-to-Many Model:

- Multiplexes many user-level threads to a smaller or equal number of kernel threads.

- Best of both worlds (flexible).

Scheduler Activations

To communicate between the user-thread library and the kernel, systems use an intermediate data structure (Lightweight Process - LWP).

- Scheduler Activation: The kernel provides a set of virtual processors (LWPs) to the application. The application can schedule user threads onto these available LWPs.

- Upcall: A mechanism where the kernel informs the application about certain events (like a thread blocking).

Example: Threaded Program (Pthreads in C)

#include <pthread.h>

#include <stdio.h>

int sum; /* this data is shared by the thread(s) */

void *runner(void *param); /* threads call this function */

int main(int argc, char *argv[]) {

pthread_t tid; /* the thread identifier */

pthread_attr_t attr; /* set of thread attributes */

/* set the default attributes of the thread */

pthread_attr_init(&attr);

/* create the thread */

pthread_create(&tid, &attr, runner, argv[1]);

/* wait for the thread to exit */

pthread_join(tid, NULL);

printf("sum = %d\n", sum);

}

/* The thread will execute in this function */

void *runner(void *param) {

int i, upper = atoi(param);

sum = 0;

for (i = 1; i <= upper; i++)

sum += i;

pthread_exit(0);

}