Unit 2 - Notes

Unit 2: Artificial Intelligence & Machine Learning

1. Introduction to Core Concepts

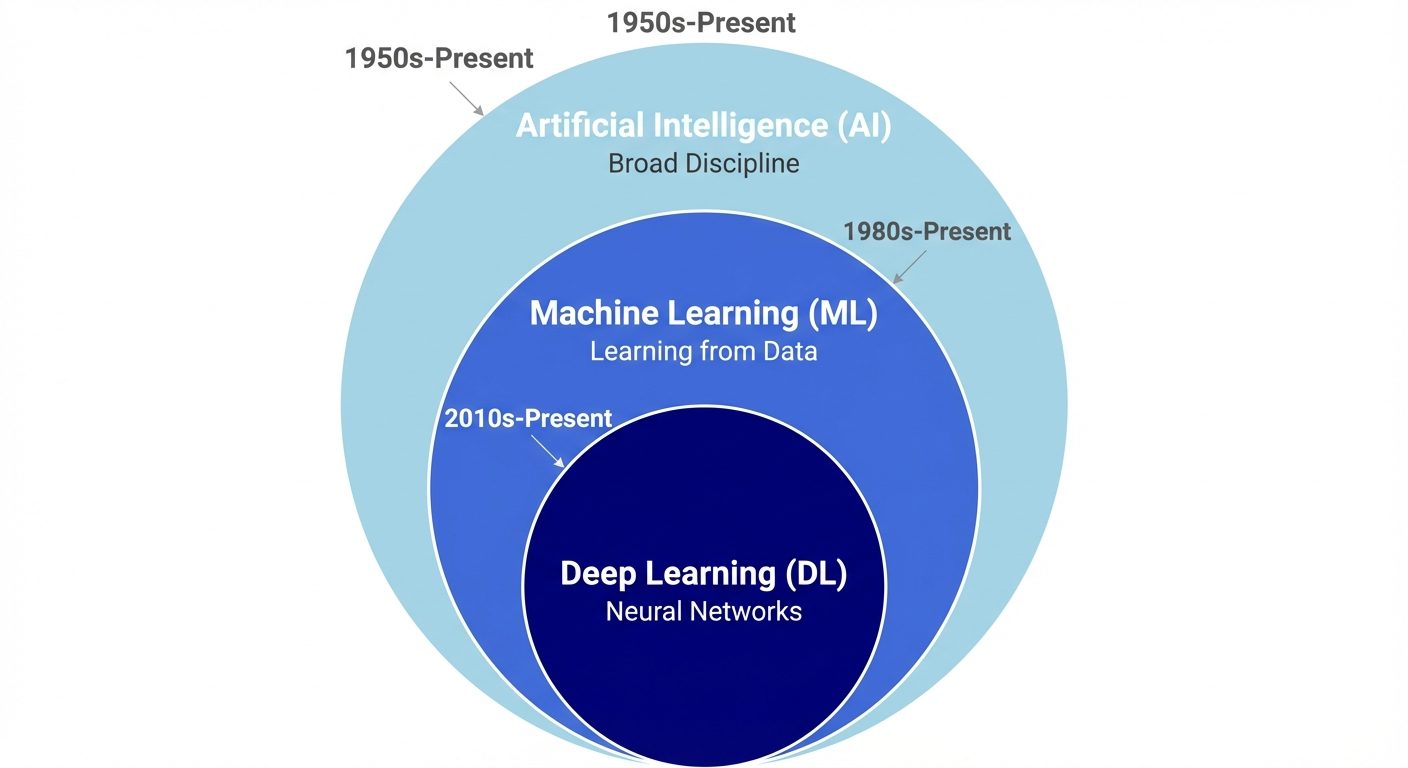

1.1 Artificial Intelligence (AI)

Artificial Intelligence is the simulation of human intelligence processes by machines, especially computer systems. These processes include learning (the acquisition of information and rules for using the information), reasoning (using rules to reach approximate or definite conclusions), and self-correction.

- Goal: To create systems capable of performing tasks that typically require human intelligence, such as visual perception, speech recognition, decision-making, and translation between languages.

- Types of AI:

  - ANI (Artificial Narrow Intelligence): Specialized in a single task or narrow domain (e.g., Siri, chess engines). This is the current state of AI.

  - AGI (Artificial General Intelligence): Machines capable of understanding, learning, and performing any intellectual task that a human can. Theoretical.

  - ASI (Artificial Super Intelligence): Machines surpassing human intelligence. Theoretical.

1.2 Machine Learning (ML)

Machine Learning is a subset of AI that focuses on the development of algorithms that enable computers to learn from and make predictions or decisions based on data. Instead of being explicitly programmed to perform a task, the machine "learns" from training data.

- Key Paradigm: Input Data + Output Data → Algorithm (Rules). Traditional programming works the other way around: Input Data + Rules → Output.

- Types of ML:

  - Supervised Learning: Learning with labeled data (e.g., classifying emails as spam or not spam).

  - Unsupervised Learning: Learning with unlabeled data (e.g., customer segmentation).

  - Reinforcement Learning: Learning through trial and error based on rewards/punishments.
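To make the key paradigm concrete (input and output data go in, a rule comes out), here is a minimal sketch of supervised learning in plain Python: ordinary least-squares fitting of a straight line y = w·x + b to labeled examples. The function name `fit_line` and the toy data are illustrative assumptions, not part of these notes.

```python
def fit_line(xs: list[float], ys: list[float]) -> tuple[float, float]:
    """Learn slope w and intercept b of y = w*x + b by ordinary least squares."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need at least two (x, y) pairs of equal length")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Slope = covariance(x, y) / variance(x)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    if var == 0:
        raise ValueError("x values must not all be equal")
    w = cov / var
    b = mean_y - w * mean_x
    return w, b


# Labeled training data: inputs and their known outputs (generated from y = 2x + 1).
xs = [1.0, 2.0, 3.0, 4.0]
ys = [3.0, 5.0, 7.0, 9.0]
w, b = fit_line(xs, ys)  # the learned "rule"
print(w, b)              # → 2.0 1.0
print(w * 5.0 + b)       # prediction for the unseen input x = 5 → 11.0
```

The program never contains the rule "multiply by 2 and add 1"; it recovers it from the examples, which is the essential difference from explicit programming.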

1.3 Deep Learning (DL)

Deep Learning is a specialized subset of ML that uses artificial neural networks, algorithms inspired by the structure and function of the human brain. It can learn from labeled data as well as from unstructured or unlabeled data such as images, audio, and raw text.

- Architecture: Uses multi-layered neural networks (hence "Deep").

- Application: Image recognition, Natural Language Processing (NLP), and sophisticated game playing (e.g., AlphaGo).
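Depth matters because stacking layers lets a network represent patterns that a single neuron cannot. A classic small example is XOR: no single threshold neuron can compute it, but a two-layer network can. The sketch below assumes a step activation, and its weights are set by hand purely for illustration; in deep learning they are learned from data (typically with backpropagation and gradient descent).

```python
def step(z: float) -> int:
    """Threshold activation: fire (1) if the weighted input is positive."""
    return 1 if z > 0 else 0


def neuron(inputs: list[int], weights: list[float], bias: float) -> int:
    """One artificial neuron: weighted sum of inputs plus bias, then activation."""
    return step(sum(x * w for x, w in zip(inputs, weights)) + bias)


def xor_network(a: int, b: int) -> int:
    # Hidden layer: one neuron computes OR, the other computes AND.
    h_or = neuron([a, b], [1.0, 1.0], -0.5)
    h_and = neuron([a, b], [1.0, 1.0], -1.5)
    # Output layer: "OR and not AND" is exactly XOR.
    return neuron([h_or, h_and], [1.0, -1.0], -0.5)


for a in (0, 1):
    for b in (0, 1):
        print(a, b, xor_network(a, b))
```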

2. Specialized Systems and Paradigms

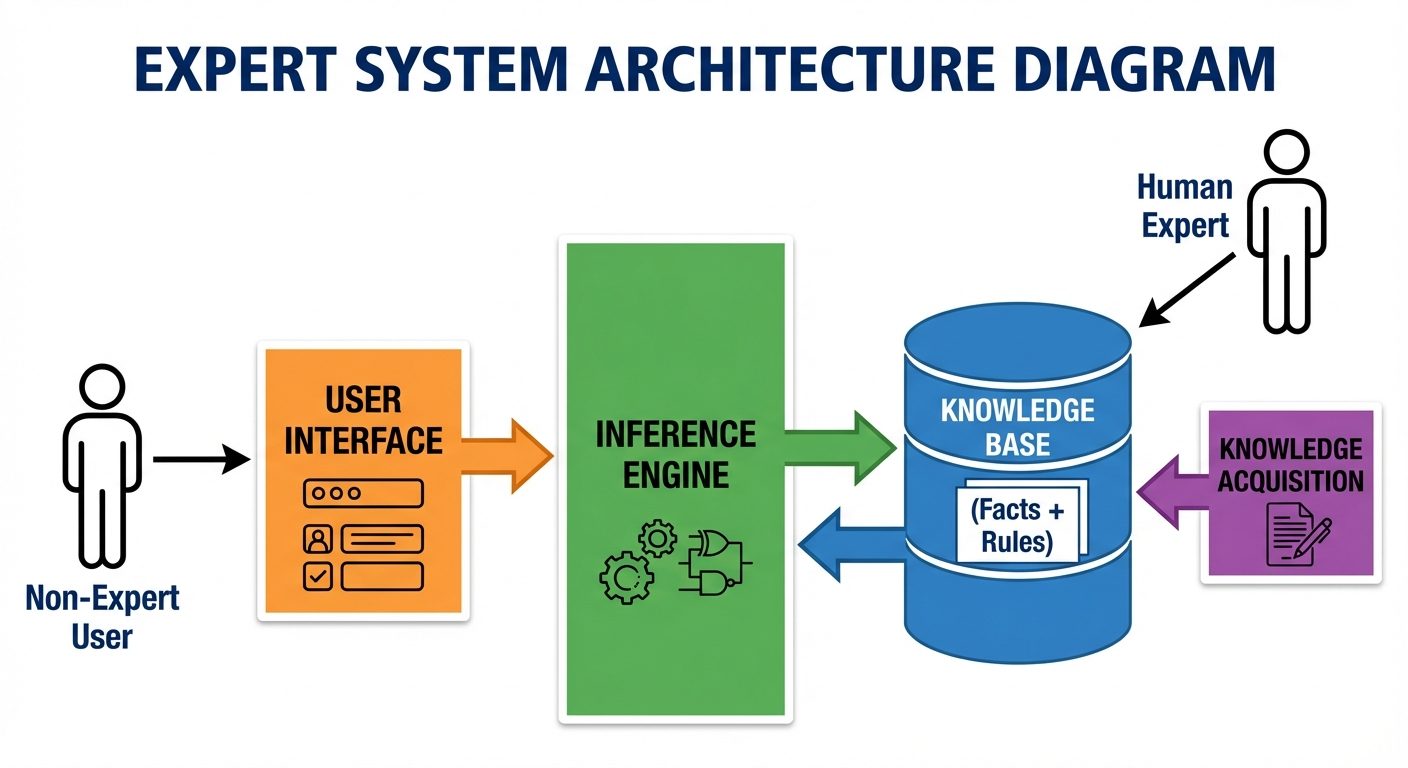

2.1 Expert Systems

An expert system is a computer program that simulates the judgment and behavior of a human or an organization that has expert knowledge and experience in a particular field.

- Components:

  - Knowledge Base: Contains specific, high-quality knowledge (facts and rules).

  - Inference Engine: The brain of the system; applies rules to the knowledge base to deduce new information.

  - User Interface: Allows non-expert users to query the system.

- Example: MYCIN (early medical diagnosis system).
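The three components can be sketched in a few lines of Python. This is a toy example: the facts and rules are hypothetical and are not taken from MYCIN, and the inference engine shown uses forward chaining (repeatedly firing any rule whose conditions are all known facts), which is one of the two classic strategies alongside backward chaining.

```python
# Knowledge base: hypothetical IF-THEN rules (conditions -> conclusion).
RULES = [
    ({"fever", "cough"}, "respiratory_infection"),
    ({"respiratory_infection", "chest_pain"}, "refer_to_doctor"),
]


def forward_chain(facts: set[str], rules: list[tuple[set[str], str]]) -> set[str]:
    """Inference engine: fire rules until no new facts can be deduced."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for conditions, conclusion in rules:
            if conditions <= known and conclusion not in known:
                known.add(conclusion)  # deduce a new fact
                changed = True
    return known


# "User interface": the user supplies observed facts and reads the conclusions.
observed = {"fever", "cough", "chest_pain"}
derived = forward_chain(observed, RULES)
print(sorted(derived - observed))  # → ['refer_to_doctor', 'respiratory_infection']
```

Note that the second rule only fires because the first rule added a new fact; this chaining of deductions is what distinguishes an inference engine from a simple lookup table.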

2.2 Fuzzy Systems (Fuzzy Logic)

Fuzzy logic mimics human reasoning: the truth of a statement can take any value in the continuous range from 0 to 1, rather than only True (1) or False (0).

- Concept: Models "partial truth" (e.g., "the room is warm" can be 0.7 true).

- Application: Used in control systems (e.g., washing machines determining load weight and dirtiness, anti-lock braking systems).

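- Crisp Set vs. Fuzzy Set: In a crisp set, membership is binary (a temperature is either Hot or not Hot). In a fuzzy set, membership is a degree between 0 and 1, so a value can belong partly to several overlapping sets (Freezing, Cold, Warm, Hot, Scorching).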
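Degrees of membership are computed with membership functions. The sketch below uses triangular membership functions, one common choice; the set names follow the notes, but the temperature breakpoints are hypothetical values chosen only for illustration.

```python
def triangular(x: float, left: float, peak: float, right: float) -> float:
    """Degree of membership (0..1) in a triangular fuzzy set."""
    if x <= left or x >= right:
        return 0.0
    if x <= peak:
        return (x - left) / (peak - left)   # rising edge
    return (right - x) / (right - peak)     # falling edge


# Hypothetical fuzzy sets for air temperature in °C: (left, peak, right).
FUZZY_SETS = {
    "Cold": (-10.0, 5.0, 18.0),
    "Warm": (12.0, 22.0, 30.0),
    "Hot": (25.0, 35.0, 50.0),
}


def fuzzify(temp_c: float) -> dict[str, float]:
    """Return the membership degree of temp_c in every fuzzy set."""
    return {name: triangular(temp_c, *abc) for name, abc in FUZZY_SETS.items()}


print(fuzzify(27.0))  # → {'Cold': 0.0, 'Warm': 0.375, 'Hot': 0.2}
```

At 27 °C the value is partly Warm and partly Hot at the same time, whereas a crisp rule such as "Hot means 30 °C or more" would simply say "not Hot".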

2.3 Augmented Reality (AR)

Augmented Reality is an interactive experience of a real-world environment in which real objects are enhanced by computer-generated perceptual information. Although AR is often categorized under computer vision/graphics, it relies heavily on AI for object recognition and depth tracking.

- AI Role in AR: Simultaneous Localization and Mapping (SLAM), object detection, and lighting estimation to make digital objects look realistic.

- Examples: Pokémon GO, IKEA Place app.

3. Use of AI in Different Fields

3.1 Natural Language Processing (NLP)

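NLP is the branch of AI concerned with the interaction between computers and human language: enabling machines to understand, interpret, and generate text and speech.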

- Tasks: Sentiment analysis, language translation, chatbots, text summarization.

- Impact: Breaking language barriers and automating customer support.
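Sentiment analysis, the first task listed, can be illustrated with the simplest possible approach: scoring text against a word lexicon. This is a deliberately naive sketch; the word lists are hypothetical, it ignores negation such as "not good", and modern NLP systems use trained models instead.

```python
import re

# Hypothetical, tiny sentiment lexicon (real lexicons contain thousands of entries).
POSITIVE = {"good", "great", "love", "excellent", "happy"}
NEGATIVE = {"bad", "terrible", "hate", "poor", "sad"}


def sentiment(text: str) -> str:
    """Label text positive, negative, or neutral by counting lexicon words."""
    words = re.findall(r"[a-z']+", text.lower())
    score = sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


print(sentiment("I love this phone, the camera is great!"))  # → positive
print(sentiment("Terrible battery and poor support."))       # → negative
```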

3.2 Healthcare

- Diagnostics: Analysis of X-rays, CT scans, and MRIs to detect tumors or fractures, often much faster than manual review by radiologists.

- Drug Discovery: Predicting molecular behavior to speed up pharmaceutical development.

- Personalized Medicine: Tailoring treatment plans based on genetic data.

3.3 Agriculture (Precision Farming)

- Crop Monitoring: Using drones and computer vision to monitor crop health.

- Predictive Analytics: Forecasting weather patterns and crop yields.

- Robotics: Automated harvesting and weed control.

3.4 Social Media Monitoring

- Content Moderation: Automatically detecting hate speech, violence, or explicit content.

- Recommendation Engines: Algorithms (e.g., on TikTok and YouTube) that analyze user behavior to suggest content.

- Sentiment Analysis: Brands monitoring public perception based on tweets and posts.
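One common technique behind recommendation engines is user-based collaborative filtering: represent each user as a vector of interactions, find the most similar user with cosine similarity, and suggest what that user engaged with. The sketch below assumes made-up interaction counts and hypothetical user names; it does not describe how TikTok or YouTube actually work, as their production systems are far more complex.

```python
import math
from typing import Sequence

# Hypothetical interaction counts per topic: [cooking, gaming, travel, music]
USERS = {
    "alice": [5, 0, 3, 1],
    "bob": [4, 0, 4, 0],
    "carol": [0, 5, 0, 4],
}


def cosine(u: Sequence[float], v: Sequence[float]) -> float:
    """Cosine similarity: 1.0 means the same taste direction, 0.0 means no overlap."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def most_similar_user(target: str) -> str:
    """Find the user whose behavior vector is closest to the target's."""
    others = (name for name in USERS if name != target)
    return max(others, key=lambda name: cosine(USERS[target], USERS[name]))


print(most_similar_user("alice"))  # → bob
```

A recommender built on this would then suggest items that bob watched but alice has not seen yet.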

4. Real-World Implementations & Case Studies

4.1 Google Translate

- Technology: Originally Phrase-Based Machine Translation (PBMT); since 2016 it has used neural machine translation, starting with Google Neural Machine Translation (GNMT).

- Mechanism: Uses deep learning (first Recurrent Neural Networks, later Transformer-based models) to translate entire sentences at once rather than piece by piece, preserving context and grammar.

4.2 Driverless Cars (Autonomous Vehicles)

- Key Technologies: Computer Vision, LiDAR (Light Detection and Ranging), Sensor Fusion.

- AI Function:

  - Perception: Identifying lane lines, pedestrians, and traffic signs.

  - Prediction: Predicting the movement of other objects.

  - Planning: Deciding the path and speed.
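The prediction and planning steps can be sketched in one dimension (a single lane). This sketch assumes a constant-velocity model for prediction and a simple keep-a-safe-gap rule for planning; both are illustrative simplifications, the class and function names are hypothetical, and real autonomous-driving stacks use far richer models.

```python
from dataclasses import dataclass


@dataclass
class TrackedObject:
    """An object reported by the perception step, measured along our lane."""
    position_m: float  # distance ahead of our car, in meters
    speed_mps: float   # its speed along the lane, in meters per second


def predict_position(obj: TrackedObject, horizon_s: float) -> float:
    """Prediction: assume the object keeps its current speed (constant velocity)."""
    return obj.position_m + obj.speed_mps * horizon_s


def plan_speed(own_speed_mps: float, lead: TrackedObject,
               horizon_s: float = 2.0, min_gap_m: float = 10.0) -> float:
    """Planning: choose the highest speed (up to the current one) that still
    leaves at least min_gap_m to the lead object after horizon_s seconds."""
    lead_future_m = predict_position(lead, horizon_s)
    max_safe_speed = (lead_future_m - min_gap_m) / horizon_s
    return max(0.0, min(own_speed_mps, max_safe_speed))


# Perception reports a slower car 25 m ahead at 10 m/s; we are driving at 20 m/s.
print(plan_speed(20.0, TrackedObject(position_m=25.0, speed_mps=10.0)))  # → 17.5
```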

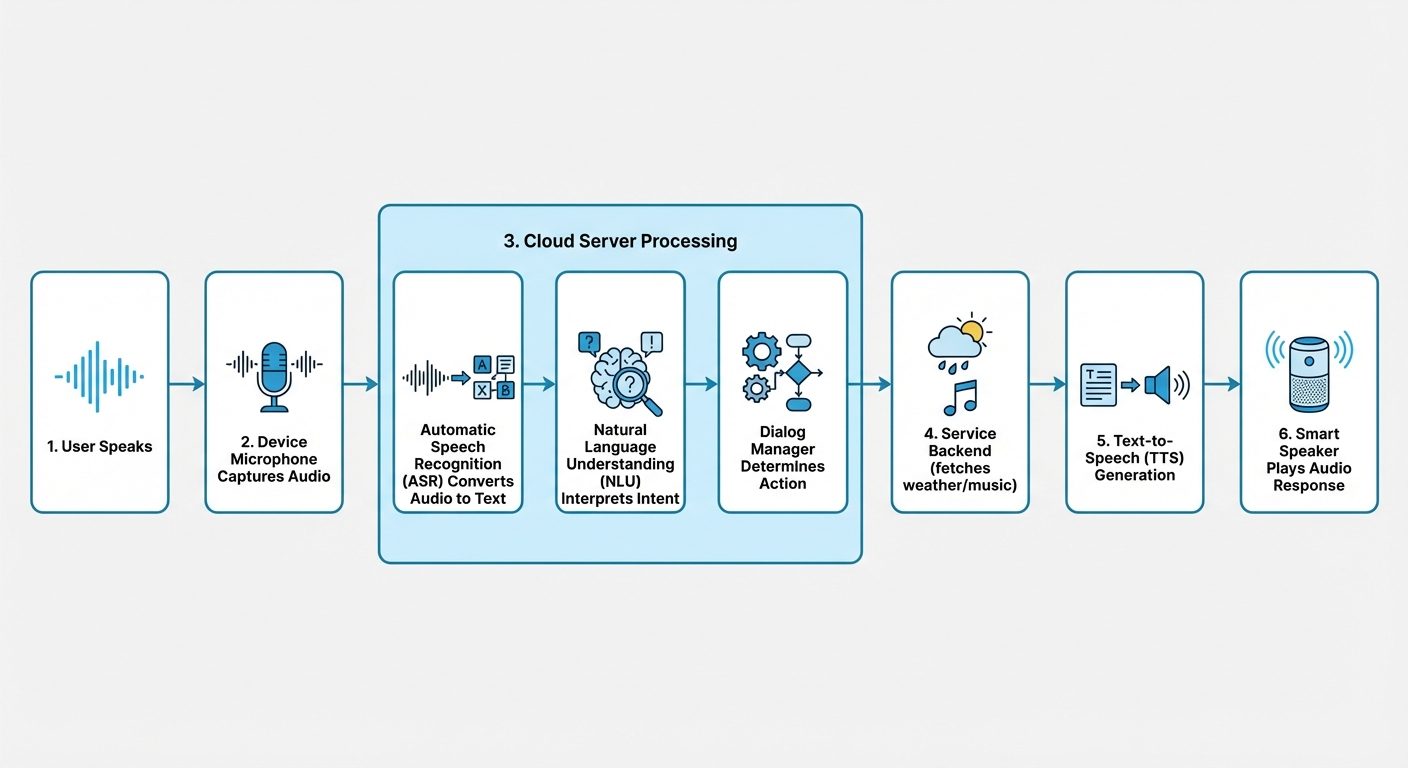

4.3 Alexa & Siri (Voice Assistants)

- Workflow:

  - Wake Word Detection: Local (on-device) processing detects "Alexa" or "Hey Siri".

  - Speech Recognition (ASR): Converts audio to text.

  - Natural Language Understanding (NLU): Determines the user's intent (e.g., "Play music").

  - Fulfillment: Carries out the request (e.g., starts playback) and composes a text response.

  - Text-to-Speech (TTS): Converts the response back to audio.
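The NLU step can be illustrated with keyword matching over the ASR transcript. This is a deliberately simplified sketch: the intent names and trigger words are hypothetical, and production voice assistants use trained intent-classification models rather than keyword lists.

```python
import re

# Hypothetical intents and their trigger words.
INTENTS = {
    "PlayMusic": {"play", "song", "music"},
    "GetWeather": {"weather", "temperature", "rain"},
    "SetTimer": {"timer", "alarm", "remind"},
}


def detect_intent(transcript: str) -> str:
    """NLU step: pick the intent whose trigger words appear most often."""
    words = re.findall(r"[a-z']+", transcript.lower())
    scores = {name: sum(w in triggers for w in words) for name, triggers in INTENTS.items()}
    best = max(scores, key=lambda name: scores[name])
    return best if scores[best] > 0 else "Unknown"


print(detect_intent("Play some music by Queen"))  # → PlayMusic
print(detect_intent("Will it rain tomorrow?"))    # → GetWeather
```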

4.4 ChatGPT

- Model: Large Language Model (LLM), specifically GPT (Generative Pre-trained Transformer).

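- Mechanism: Trained on massive amounts of text to predict the next word (more precisely, the next token) in a sequence. Its attention mechanism lets each token weigh how relevant every earlier token is, which is how it keeps track of context across long passages and conversations.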

- Type: Generative AI (creates new content rather than just analyzing existing content).
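The core of the attention mechanism in Transformers is scaled dot-product attention: a token's query vector is compared with every token's key vector, the scores are divided by √d (d = vector length) and passed through softmax, and the resulting weights average the value vectors. The sketch below computes this for a single query in plain Python; the vectors are made-up toy numbers, whereas real models learn the query/key/value projections and run many attention heads in parallel.

```python
import math


def softmax(scores: list[float]) -> list[float]:
    """Turn raw scores into positive weights that sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(query: list[float], keys: list[list[float]],
              values: list[list[float]]) -> tuple[list[float], list[float]]:
    """Scaled dot-product attention for one query over a sequence of tokens.

    Returns the attention weights and the weighted sum of the value vectors.
    """
    d = len(query)
    scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(d) for key in keys]
    weights = softmax(scores)
    output = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(len(values[0]))]
    return weights, output


# Toy 2-dimensional vectors for three tokens (values are made up).
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
values = [[10.0, 0.0], [0.0, 10.0], [5.0, 5.0]]
weights, output = attention([1.0, 0.0], keys, values)
print([round(w, 3) for w in weights], [round(o, 3) for o in output])
```

Here the first and third tokens, whose keys align with the query, receive equal and larger weights than the second, so the output is pulled toward their value vectors.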

5. Tools and Techniques for Implementation

5.1 Techniques (Algorithms)

- Regression: Predicting continuous values (e.g., house prices).

- Classification: Predicting categories (e.g., Cat vs. Dog).

- Clustering: Grouping similar data points (e.g., K-Means).

- Neural Networks: Layers of nodes simulating neurons for complex pattern recognition.
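K-Means, named above as a clustering example, can be sketched in plain Python for 2-D points. This version assumes the initial centroids are passed in (real implementations usually pick them randomly or with k-means++) and runs a fixed number of iterations instead of testing for convergence.

```python
import math


def kmeans(points: list[tuple[float, float]], centroids: list[tuple[float, float]],
           iterations: int = 10) -> list[int]:
    """Plain K-Means: alternate between assigning points and moving centroids."""
    centroids = list(centroids)
    labels = [0] * len(points)
    for _ in range(iterations):
        # Assignment step: each point joins its nearest centroid.
        labels = [min(range(len(centroids)), key=lambda c: math.dist(p, centroids[c]))
                  for p in points]
        # Update step: each centroid moves to the mean of its assigned points.
        for c in range(len(centroids)):
            members = [p for p, lab in zip(points, labels) if lab == c]
            if members:  # keep the old centroid if a cluster ends up empty
                centroids[c] = (sum(x for x, _ in members) / len(members),
                                sum(y for _, y in members) / len(members))
    return labels


points = [(1.0, 1.0), (1.5, 2.0), (1.0, 1.5), (8.0, 8.0), (9.0, 8.5), (8.5, 9.0)]
print(kmeans(points, [(0.0, 0.0), (10.0, 10.0)]))  # → [0, 0, 0, 1, 1, 1]
```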

5.2 Popular Tools & Libraries

- Python: The dominant programming language for AI due to simplicity and library support.

- TensorFlow (Google): End-to-end open-source platform for machine learning.

- PyTorch (Facebook/Meta): Flexible deep learning framework, popular in research.

- Scikit-learn: Simple and efficient tools for predictive data analysis (classic ML).

- OpenCV: Library for real-time computer vision.

6. Current Trends, Opportunities, and Careers

6.1 Current Trends

- Generative AI: Explosion of tools like Midjourney (images) and GPT-4 (text).

- Edge AI: Running AI models locally on devices (phones, IoT) rather than in the cloud, for better privacy and lower latency.

- Explainable AI (XAI): Making AI decisions transparent and understandable to humans.

- AI Ethics: Focus on bias reduction, deepfake detection, and data privacy.

6.2 Job Roles

- Data Scientist: Analyzes complex data to help organizations make decisions.

- Machine Learning Engineer: Designs and builds the AI models and systems.

- AI Research Scientist: Invents new algorithms and approaches (usually requires a PhD).

- NLP Engineer: Specializes in language-related AI applications.

- AI Ethicist: Ensures AI systems are fair, transparent, and compliant with laws.

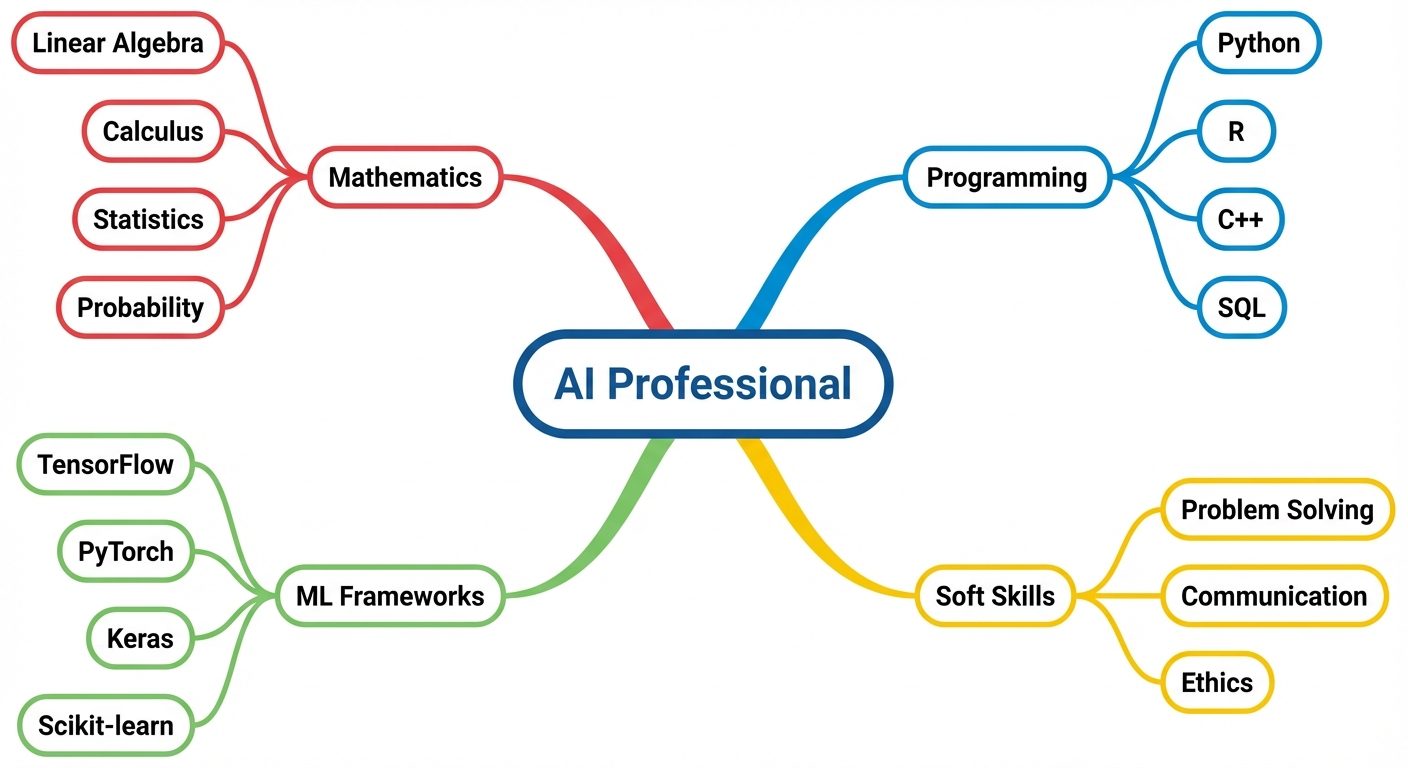

6.3 Required Skillset

- Technical: Python/R programming, Linear Algebra, Calculus, Probability, Cloud Computing (AWS/Azure).

- Soft Skills: Problem-solving, critical thinking, domain expertise (e.g., understanding finance if building Fintech AI).